Last week, Google launched an alpha version of a new product called Google Container Engine (GKE). It’s a service that runs pre-packaged Docker images for you: you tell GKE about the images you want to run (typically ones you’ve put in the Docker Registry, although there’s also a hack to run private images) and how many instances you need. GKE will spin them up and make sure the right number is running at any given time.

The GKE Getting Started guide is long and complicated and has more JSON than you can shake a stick at. I suspect that’s because the product is still alpha, and I hope the Google guys will improve both the CLI and the web UI. Anyway, below is a simpler guide showing how to stand up a stateless web site with just one Docker image type. I’m also including some analysis at the end of this post.

I’m using a Mono/ASP.NET vNext Docker image, but all you need to know is that it’s an image that exposes port 5004 and serves HTTP requests on that port. There’s nothing significant about port 5004 – if you want to try with an image that uses a different port, simply substitute as appropriate.

In the interest of brevity, the description below skips over many details. If you want more depth, then remember that GKE is Kubernetes-as-a-Service and check out the Kubernetes documentation and design docs.

Setup

- Go to the Google Developer Console and create a new project

- For that project, head into the “APIs” panel and make sure you have the “Google Container Engine API” enabled

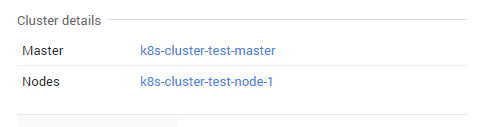

- In the “Compute” menu section, select “Container Engine” and create yourself a new “Cluster”. A cluster size of 1 and a small instance is fine for testing. This guide assumes cluster name “test-cluster” and zone “us-central1-a”.

- Install the CLI and run

gcloud config set project PROJECT_ID (PROJECT_ID is from step 1)

Running a raw Pod

The simplest (and not recommended) way to get something up and running is to start a Pod and connect to it directly with HTTP. This is roughly equivalent to starting an AWS EC2 instance and connecting to its external IP.

First step is to create a JSON-file somewhere on your system, let’s call it pod.json:

    {
      "id": "web",
      "kind": "Pod",
      "apiVersion": "v1beta1",
      "desiredState": {
        "manifest": {
          "version": "v1beta2",
          "containers": [
            {
              "name": "web",
              "image": "friism/aspnet-web-sample-web",
              "ports": [
                { "containerPort": 5004, "hostPort": 80 }
              ]
            }
          ]
        }
      },
      "labels": {
        "name": "web"
      }
    }

What you should care about is the Docker image/repository getting run (friism/aspnet-web-sample-web) and the port mapping (the equivalent of docker run -p 80:5004). With that, we can tell GKE to start a pod for us:

    $ gcloud preview container pods --cluster-name test-cluster --zone us-central1-a \
        create web --config-file=/path/to/pod.json
    ...
    ID Image(s) Host Labels Status
    ---------- ---------- ---------- ---------- ----------
    web friism/aspnet-web-sample-web <unassigned> name=web Waiting

All the stuff before “create” is boilerplate and the rest is saying that we’re requesting a pod named “web” as specified in the JSON file.
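If you’d rather not hand-edit JSON, a file like pod.json can be generated with a few lines of script. The sketch below is just one way to do it: the make_pod helper is my own invention for illustration (it is not part of gcloud or Kubernetes), and it simply mirrors the fields used in pod.json above.

```python
import json


def make_pod(pod_id, image, container_port, host_port):
    """Build a pod definition shaped like pod.json above (hypothetical helper)."""
    return {
        "id": pod_id,
        "kind": "Pod",
        "apiVersion": "v1beta1",
        "desiredState": {
            "manifest": {
                "version": "v1beta2",
                "containers": [{
                    "name": pod_id,
                    "image": image,
                    # Same mapping as `docker run -p 80:5004`
                    "ports": [{"containerPort": container_port,
                               "hostPort": host_port}],
                }],
            }
        },
        "labels": {"name": pod_id},
    }


pod = make_pod("web", "friism/aspnet-web-sample-web", 5004, 80)
pod_json = json.dumps(pod, indent=2)
print(pod_json)
```

Redirect the output to a file and pass that file to --config-file as shown above.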

Pods take a while to get going, probably because the Docker image has to be downloaded from Docker Hub. While it’s starting (and after), you can SSH into the instance that’s running your pod to see how it’s doing, e.g. by running sudo docker ps. This is the SSH incantation:

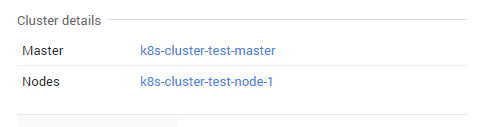

$ gcloud compute ssh --zone us-central1-a k8s-test-cluster-node-1

The instances are named k8s-<cluster-name>-node-1 and you can see them listed in the Web UI or with gcloud compute instances list. Wait for the pod to change status to “Running”:

    $ gcloud preview container pods --cluster-name test-cluster --zone us-central1-a list
    ID Image(s) Host Labels Status
    ---------- ---------- ---------- ---------- ----------
    web friism/aspnet-web-sample-web k8s-<..>.internal/146.148.66.67 name=web Running
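If you want to script the waiting, here is a minimal sketch that pulls the status out of a listing like the one above. It assumes the output keeps the layout shown here (ID in the first column, Status in the last), which may well change while the product is in alpha. The pod_status function is a hypothetical helper, and the sample text is copied from the listing above rather than fetched from gcloud.

```python
def pod_status(listing, pod_id):
    """Return the Status column for pod_id, or None if the pod is not listed."""
    for line in listing.splitlines():
        fields = line.split()
        # Assumption: the ID is the first column and Status is the last one.
        if fields and fields[0] == pod_id:
            return fields[-1]
    return None


sample = """ID Image(s) Host Labels Status
---------- ---------- ---------- ---------- ----------
web friism/aspnet-web-sample-web k8s-<..>.internal/146.148.66.67 name=web Running"""

print(pod_status(sample, "web"))
```

In a real script you would feed it the captured output of the list command and loop until it returns Running.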

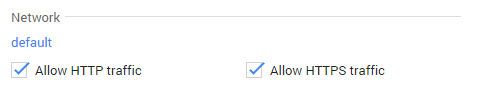

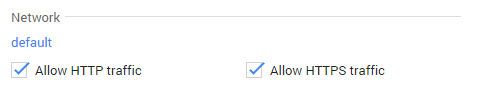

The final step is to open up HTTP traffic to the pod. This setting is available in the Web UI for the instance (e.g. k8s-test-cluster-node-1). Also check that the network settings for the instance allow TCP traffic on port 80.

And with that, your site should be responding on the external ephemeral IP address of the host running the pod.

As mentioned above, this is not a production setup. The Kubernetes service running the pod will do process management and restart Docker containers that die for any reason (to test this, try SSH’ing into your instance and running sudo docker kill on the container that’s running your site; a new one will quickly pop up). But your site will still go down if, for example, there’s a problem with the pod itself. Read on for details on how to extend the setup to cover that failure mode.

Adding Replication Controller and Service

In this section, we’re going to get rid of the pod-only setup above and replace it with a replication controller and a service fronted by a loadbalancer. If you’ve been following along, delete the pod created above to start with a clean slate (you can also start with a fresh cluster).

First step is to create a replication controller. You tell a replication controller what and how many pods you want running, and the controller then tries to make sure the correct formation is running at any given time. Here’s controller.json for our simple use case:

    {
      "id": "web",
      "kind": "ReplicationController",
      "apiVersion": "v1beta1",
      "desiredState": {
        "replicas": 1,
        "replicaSelector": {"name": "web"},
        "podTemplate": {
          "desiredState": {
            "manifest": {
              "version": "v1beta1",
              "id": "frontendController",
              "containers": [{
                "name": "web",
                "image": "friism/aspnet-web-sample-mvc",
                "ports": [{"containerPort": 5004, "hostPort": 80 }]
              }]
            }
          },
          "labels": { "name": "web" }
        }
      },
      "labels": {"name": "web"}
    }

Notice how it’s similar to the pod configuration, except we’re specifying how many pod replicas the controller should try to have running. Create the controller:

    $ gcloud preview container replicationcontrollers --cluster-name test-cluster \
        create --zone us-central1-a --config-file /path/to/controller.json
    ...
    ID Image(s) Selector Replicas
    ---------- ---------- ---------- ----------
    web friism/aspnet-web-sample-mvc name=web 1

You can now query and see the controller spinning up the pods you requested. As above, this might take a while.
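To make the controller’s job a bit more concrete, here is a toy model of the bookkeeping it has to do: count the pods matching its replicaSelector and compare that to the desired replica count. This is only my simplified illustration, not how Kubernetes actually implements it. The pods_to_start function and the pod IDs are made up for the example.

```python
def pods_to_start(desired_replicas, selector, pods):
    """Return how many pods to start (positive) or stop (negative)."""
    matching = [
        pod for pod in pods
        # A pod counts if it carries every key/value pair in the selector.
        if all(pod["labels"].get(k) == v for k, v in selector.items())
    ]
    return desired_replicas - len(matching)


# Hypothetical cluster state: one pod matching name=web, one unrelated pod.
pods = [
    {"id": "web-1", "labels": {"name": "web"}},
    {"id": "other", "labels": {"name": "db"}},
]

print(pods_to_start(1, {"name": "web"}, pods))  # 0: nothing to do
print(pods_to_start(3, {"name": "web"}, pods))  # 2: two more needed
print(pods_to_start(1, {"name": "web"}, []))    # 1: the pod died, start one
```

The last case is the interesting one: when the only matching pod disappears, the difference becomes positive and a replacement gets requested.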

Now, let’s get a GKE service going. While individual pods come and go, services are permanent and define how pods of a specific kind can be accessed. Here’s service.json that’ll define how to access the pods that our controller is running:

    {
      "id": "myapp",
      "selector": {
        "app": "web"
      },
      "containerPort": 80,
      "protocol": "TCP",
      "port": 80,
      "createExternalLoadBalancer": true
    }

The important parts are selector, which specifies that this service is about the pods labelled web above (selectors match on both the label key and its value), and createExternalLoadBalancer, which gets us a loadbalancer that we can use to access our site (instead of accessing the raw ephemeral node IP). Create the service:

    $ gcloud preview container services --cluster-name test-cluster --zone us-central1-a \
        create --config-file=/path/to/service.json
    ...
    ID Labels Selector Port
    ---------- ---------- ---------- ----------
    myapp app=web 80
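A quick aside on selectors, since they are easy to get subtly wrong. The sketch below is a simplified model of label matching (the selects function is hypothetical, not a Kubernetes API): a selector picks a pod only if every key/value pair in it appears in the pod’s labels. Note that service.json above selects on the key app, while the pods created by the controller are labelled with the key name, so it is worth double-checking that the two agree in your own setup.

```python
def selects(selector, labels):
    """True if every key/value pair in the selector is present in the labels."""
    return all(labels.get(key) == value for key, value in selector.items())


# Labels as set on the pods by controller.json above.
pod_labels = {"name": "web"}

print(selects({"name": "web"}, pod_labels))  # True
print(selects({"app": "web"}, pod_labels))   # False
```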

At this point, you can go find your loadbalancer IP in the Web UI under Compute Engine -> Network load balancing. To actually see my site, I still had to tick the “Enable HTTP traffic” boxes for the Compute Engine node running the pod. I’m unsure whether that’s a bug or me being impatient. The loadbalancer IP is permanent and you can safely create DNS records and such pointing to it.

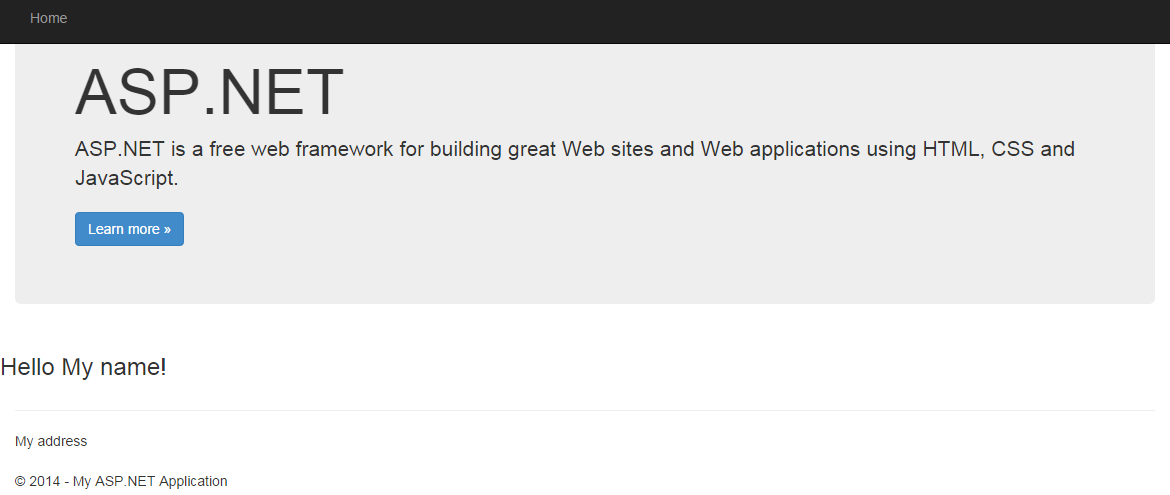

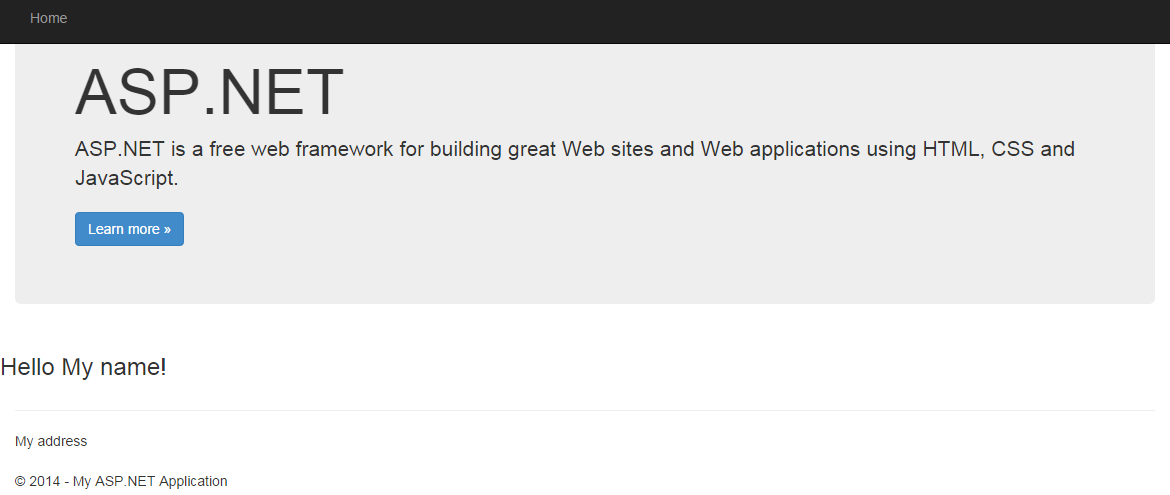

That’s it! Our stateless web app is now running on Google Container Engine. I don’t think the default Bootstrap ASP.NET MVC template has ever been such a welcome sight.

Analysis

Google Container Engine is still in alpha, so one shouldn’t draw any conclusions about the end-product yet (also note that I work for Heroku and we’re in the same space). Below are a few notes though.

Google Container Engine is “Kubernetes-as-a-Service”, and Kubernetes is currently exposed without any filter. Kubernetes is designed based on Google’s experience running containers at scale, and it may be that Kubernetes is (or is going to be) the best way to do that. It also comes with a huge mental model, however: just look at all the stuff we had to do to launch and run a simple stateless web app. And while the abstractions (pods, replication controllers, services) may make sense for the operator of a fleet of containers, I don’t think they map well to the mental model of a developer just wanting to run code or Docker containers.

Also, even with all the work we did above, we’re not actually left with a managed and resilient capital-S Service. What Google did for us when the cluster was created was simply to spin up a set of machines running Kubernetes. It’s still on you to make sure Kubernetes is running smoothly on those machines. As an example, a GKE cluster currently only has one Master node. This is the Kubernetes control plane node that accepts API input and schedules pods on the GCE instances that are Kubernetes minions. As far as I can determine, if that node dies, then pods will no longer get scheduled and restarted on your cluster. I suspect Google will add options for more fault-tolerant setups in the future, but it’s going to be interesting to see what operator responsibility the consumer of GKE will have to take on vs. what Google will operate for you as a Service.